Fairfield researchers are ethically applying AI to environmental challenges.

On a monitor in the School of Engineering and Computing’s Artificial Intelligence Lab, Nusrat Zahan MS’25 peers at a murky underwater image clouded by silt and shadow. As her lines of code run, the image sharpens, revealing the vibrant reds, blues, and yellows of invasive fish species that threaten the fragile reef ecosystem.

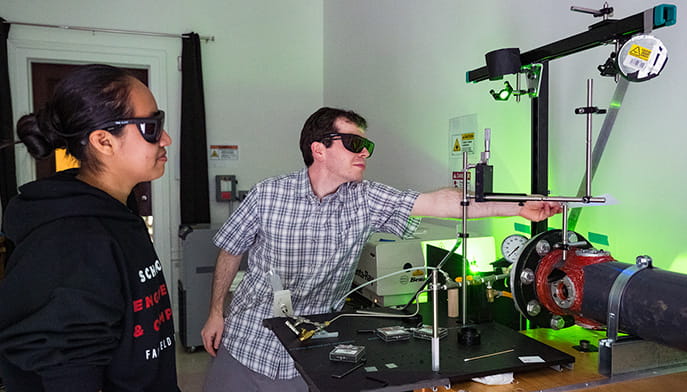

A few feet away, a graduate student is surrounded by glowing screens of geospatial and soil data. He is training a machine-learning model to help monitor geotechnical infrastructure—analyzing ground conditions to determine whether soil can safely support buildings and other foundations, particularly as extreme weather events become more frequent.

This kind of transformation—turning raw, unreadable data into actionable insights—is at the heart of the work underway in Fairfield’s AI Lab. Leading the charge is Sidike Paheding, PhD, associate professor and chair of the Computer Science Department. As an AI ethics researcher, Dr. Paheding teaches his students to tackle global environmental challenges by building AI models that account for bias and risk from the earliest stages of design.

“AI ethics sets the compass, establishing a solid foundation for developing future AI systems that are safe, secure, and trustworthy,” said Dr. Paheding, who serves as principal investigator on collaborative projects, funded by the National Science Foundation (NSF), that focus on advancing AI ethics education.

With support from the U.S. Geological Survey and under Dr. Paheding’s mentorship, Zahan has developed underwater image enhancement techniques, focused particularly on illumination correction and color restoration, that are powered by deep-learning models built in-house. Her research has been published in several international journals.

Deep learning, a subset of machine learning, draws inspiration from the structure of the human brain. The Fairfield researchers explained that deep-learning models are built from layered neural networks that are trained to recognize patterns and process complex data. Dr. Paheding and his students emphasized that these models vary based on the problem being addressed and the data sets used to train them. Ultimately, the work is driven by the people behind the scenes who identify risk factors and design models intended to anticipate risk before it becomes a reality. “When developing AI models, there is no universal solution to these complex challenges,” Dr. Paheding said.